Advanced Record Analysis – 9168222527, Cowboywitdastic, 117.239.200.170, 111.90.150.204p, 2128081380

Advanced Record Analysis examines how the identifiers 9168222527 and Cowboywitdastic and the strings 117.239.200.170, 111.90.150.204p, and 2128081380 relate within a documented provenance framework. Of the three strings presented as IP clusters, only 117.239.200.170 is a well-formed IPv4 address as written: 111.90.150.204p carries a trailing character and 2128081380 is an undotted number, so both need validation before they can be treated as addresses. The approach emphasizes source legitimacy, reproducible methods, and anomaly detection against established baselines. It outlines noise filtration, cryptographic checks, and audit trails to support traceable conclusions. The discussion then turns to applying these techniques to refine decisions, although a critical question remains about the limits of current provenance models.

What Advanced Record Analysis Is and Why It Matters

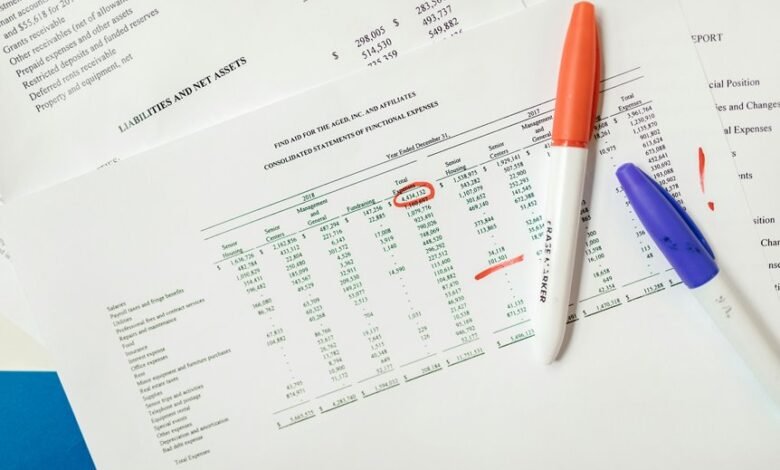

Advanced Record Analysis refers to the systematic examination of stored records to extract accurate, verifiable information about past events, processes, and decisions.

The approach emphasizes reproducible methods and transparent reasoning.

It supports insight mapping, which reveals relationships between records and how they change over time.

Anomaly signaling flags deviations from expected patterns so they can be validated and, where necessary, corrected.

The result is a clearer, better-documented basis for informed decisions.

Decoding Identifiers: 9168222527, Cowboywitdastic, and the IP Clusters

Building on the systematic scrutiny of records described above, this section turns to the specific identifiers used in tracing activity: the numeric string 9168222527, the handle Cowboywitdastic, and the concept of IP clusters. Provenance techniques and anomaly detection are applied to these identifiers to map how they relate to one another.
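A first decoding step is to sort raw identifiers by shape before any tracing begins. The sketch below is a minimal illustration using Python's standard `ipaddress` module; the category names and the `classify_identifier` helper are assumptions made for this example, not part of any established scheme.

```python
import ipaddress


def classify_identifier(value: str) -> str:
    """Return a coarse, illustrative category for a raw identifier string."""
    try:
        ipaddress.ip_address(value)  # accepts well-formed IPv4 or IPv6 text
        return "ip_address"
    except ValueError:
        pass
    if value.isdigit():
        return "numeric_string"
    if value.count(".") == 3:
        # Looks like a dotted quad but failed validation (hypothetical category).
        return "malformed_ip_like"
    return "handle"


identifiers = [
    "9168222527",
    "Cowboywitdastic",
    "117.239.200.170",
    "111.90.150.204p",
    "2128081380",
]
classified = {value: classify_identifier(value) for value in identifiers}
```

Under these rules only 117.239.200.170 validates as an address. 111.90.150.204p is flagged as malformed, and the two undotted numbers are treated as plain numeric strings rather than guessed at.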

Provenance, Legitimacy, and Anomaly Detection Techniques

How can provenance be established and verified within complex data ecosystems, and what criteria determine legitimacy while guiding anomaly detection?

The analysis adopts a structured approach: trace data lineage, authenticate sources, and document transformations.

Legitimacy hinges on governance, accountability, and reproducibility.

Provenance verification employs cryptographic checks and audit trails; anomaly detection leverages baselines, contextual signals, and cross-domain corroboration.
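The combination of cryptographic checks and audit trails can be illustrated with a hash-chained log, where each entry's hash covers the previous entry's hash. This is a sketch of one common pattern, not the method of any particular system; the payload fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed starting value for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


def append_entry(trail: list, payload: dict) -> None:
    """Append a payload to the trail, chaining it to the last entry."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    trail.append({"payload": payload, "hash": entry_hash(prev_hash, payload)})


def verify_trail(trail: list) -> bool:
    """Recompute every hash in order; any edited payload breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


trail = []
append_entry(trail, {"step": "ingest", "source": "example-log"})
append_entry(trail, {"step": "normalize", "rule": "trim-whitespace"})
intact = verify_trail(trail)

# Simulate tampering with a recorded transformation.
trail[0]["payload"]["source"] = "edited"
tampered_detected = not verify_trail(trail)
```

A chain like this detects edits after the fact but does not prevent them. A production audit trail would also need protected storage and, typically, signatures.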

From Data Noise to Actionable Insights: A Practical Workflow

Once provenance is established, the workflow shifts from validating data origins to converting raw observations into actionable insights. It relies on noise filtration, provenance checks, and structured anomaly detection. Analysts translate signals into decisions through traceable steps, reproducible checks, and documented assumptions, which leaves room to question, adapt, and refine the methodology.
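The filtering and anomaly steps of this workflow might look like the following sketch. The text does not name a detection method, so this example assumes a modified z-score based on the median absolute deviation, with the conventional 3.5 cutoff; the record fields `id` and `value` are hypothetical.

```python
from statistics import median


def filter_noise(records):
    """Drop records with a missing value and exact duplicates, keeping order."""
    seen = set()
    cleaned = []
    for rec in records:
        key = (rec.get("id"), rec.get("value"))
        if rec.get("value") is None or key in seen:
            continue
        seen.add(key)
        cleaned.append(rec)
    return cleaned


def flag_anomalies(records, threshold=3.5):
    """Flag records whose modified z-score against the median baseline exceeds the threshold."""
    values = [rec["value"] for rec in records]
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return []  # no spread in the baseline, so nothing can be scored
    return [
        rec for rec in records
        if 0.6745 * abs(rec["value"] - center) / mad > threshold
    ]


records = [
    {"id": "a", "value": 10},
    {"id": "b", "value": 11},
    {"id": "c", "value": 9},
    {"id": "a", "value": 10},    # exact duplicate, filtered as noise
    {"id": "d", "value": None},  # missing value, filtered as noise
    {"id": "e", "value": 12},
    {"id": "f", "value": 10},
    {"id": "g", "value": 95},    # far from the baseline
]
cleaned = filter_noise(records)
anomalies = flag_anomalies(cleaned)
```

A median-based baseline is used here because a single extreme value shifts it far less than it would shift a mean. A flagged record is a prompt for validation, not a conclusion.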

Frequently Asked Questions

How Reliable Are These Identifiers Across Different Networks?

Reliability varies. Reproducibility gaps emerge across networks and limit consistency. Cross-network calibration improves comparability but cannot fully normalize identifiers, because differences in allocation, timing, and routing persist in heterogeneous environments.

Can These Metrics Predict Future Data Integrity Issues?

Predictions are uncertain; metrics alone cannot guarantee future data integrity. However, monitoring identifier drift and anomaly labels over time can provide early warning signals that guide proactive remediation and continuous improvement in network governance and data quality practices.
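One way to monitor drift, assuming identifiers are collected in consecutive time windows, is to compare each window's identifier set with the previous one. The sketch below uses Jaccard distance and an arbitrary threshold of 0.5; both choices, and the placeholder identifiers, are assumptions for illustration.

```python
def jaccard_distance(a: set, b: set) -> float:
    """Return 1 minus the share of identifiers common to both sets."""
    union = a | b
    if not union:
        return 0.0
    return 1.0 - len(a & b) / len(union)


def drift_alerts(windows, threshold=0.5):
    """Return indices of windows that drifted past the threshold
    relative to the immediately preceding window."""
    alerts = []
    for i in range(1, len(windows)):
        if jaccard_distance(windows[i - 1], windows[i]) > threshold:
            alerts.append(i)
    return alerts


# Placeholder identifier sets for three consecutive observation windows.
windows = [
    {"id-1", "id-2", "id-3"},
    {"id-1", "id-2", "id-4"},
    {"id-7", "id-8", "id-9"},
]
alerts = drift_alerts(windows)
```

An alert of this kind is an early warning that the population of identifiers has changed, not a prediction that data integrity will fail.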

What Privacy Risks Accompany Deep Provenance Tracking?

Deep provenance tracking carries privacy risks, including data exposure, potential re-identification, and a heavier consent burden. It enables traceability across systems but may reveal sensitive operational details, workflows, and associations, inviting misuse and surveillance if inadequately controlled.
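One mitigation, offered here as an assumption and not as a complete privacy control, is to store keyed pseudonyms in provenance records in place of raw identifiers. The sketch below uses HMAC-SHA256 from the standard library; the key shown is a placeholder that would need to come from a managed secret store in practice.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, key: bytes) -> str:
    """Return a stable keyed pseudonym; the raw identifier is not stored."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


# Placeholder key for illustration only; never hard-code a real key.
demo_key = b"replace-with-a-managed-secret"

token_a = pseudonymize("user-alpha", demo_key)
token_a_again = pseudonymize("user-alpha", demo_key)
token_b = pseudonymize("user-beta", demo_key)
```

Because the same input always yields the same token, records can still be linked for lineage purposes. That same linkability means pseudonymization reduces exposure but does not by itself rule out re-identification.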

Do Anomalies Imply Malicious Intent or False Positives?

An anomaly does not by itself indicate malicious intent; it may be a false positive. How it is read depends on the interpretation method and the tolerance for false positives. Provenance ethics should guide how network variance is assessed, and the limits of predictive models, the breadth of the dataset, privacy safeguards, and scale all shape interpretation and risk-aware decision making.

How Scalable Is the Workflow for Large Datasets?

Scalable workflows handle large datasets with adaptive partitioning and parallel processing, though cross-network reliability hinges on robust synchronization. Identifier convergence improves consistency, but scalability ultimately depends on governance, monitoring, and transparent performance metrics across systems.
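Partitioning followed by parallel processing can be sketched as below. This example uses fixed hash partitioning, not the adaptive partitioning mentioned above, and a thread pool from the standard library; the record shape and function names are hypothetical.

```python
import zlib
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def partition(records, num_partitions):
    """Assign each record to a bucket using a stable hash of its id."""
    buckets = [[] for _ in range(num_partitions)]
    for rec in records:
        index = zlib.crc32(rec["id"].encode("utf-8")) % num_partitions
        buckets[index].append(rec)
    return buckets


def count_ids(bucket):
    """Per-partition work: count how often each id occurs."""
    return Counter(rec["id"] for rec in bucket)


def parallel_counts(records, num_partitions=4):
    """Process partitions concurrently, then merge the partial results."""
    buckets = partition(records, num_partitions)
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partials = list(pool.map(count_ids, buckets))
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total


records = [{"id": name} for name in ["a", "b", "a", "c", "b", "a", "d"]]
totals = parallel_counts(records)
```

Hashing on the identifier keeps all records for one id in the same partition, so the partial counts merge without conflict. For CPU-bound work in CPython, a process pool or a distributed framework is likely a better fit than threads.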

Conclusion

Advanced Record Analysis aims for conclusions that are traceable, auditable, and reproducible, not for certainty. Each identifier (9168222527, Cowboywitdastic, and the IP clusters) passes through the same provenance workflow, in which sources are weighed, noise is filtered, and anomalies are flagged for validation. Because identifier reliability varies across networks and an anomaly does not by itself imply malicious intent, the resulting conclusions remain open to review and refinement.